How Conversational AI Changes Web Accessibility

Conversational AI is transforming web accessibility by making digital spaces easier to navigate for people with disabilities. Rather than relying only on traditional assistive tools such as screen readers, users can also interact with websites using voice commands, text, and even visual inputs. This shift simplifies complex tasks, reduces cognitive load, and offers more personalized experiences.

Key Takeaways:

- Voice Navigation: Users can control websites through natural dialogue, bypassing the need for physical tools like keyboards or mice.

- Real-Time Text-to-Speech (TTS): Converts written content into audio, benefiting users with visual impairments or reading difficulties.

- Error Assistance: Provides real-time guidance for navigating complex layouts and completing forms.

- AI-Driven Tools: Platforms like TTSBuddy offer free, multilingual TTS services and conversational interfaces for smoother web interactions.

Studies show these tools improve task success rates and reduce frustration, especially for those with cognitive or motor disabilities. With advancements like interactive audio descriptions and multimodal integration, Conversational AI is reshaping how users engage with digital content, making websites more functional for everyone.

How Conversational AI Improves Accessibility

Voice-Based Website Navigation

Voice commands are changing how people interact with websites, offering a way to navigate through natural dialogue instead of relying on the traditional point-and-click method [2].

In early 2023, researchers at Politecnico di Milano worked on a co-design study with five participants who had dysarthria and motor impairments. They combined the CapisciAMe speech recognition engine with the ConWeb platform, enabling users to explore the "TecnologicaMente InSuperAbili" website using straightforward voice commands paired with visual labels. This integration allowed individuals with severe motor limitations to access complex website layouts that would have otherwise been out of reach.

However, standard voice assistants such as those from Google, IBM, and Microsoft often show word error rates as high as 80-90% when processing speech from individuals with severe dysarthria. To tackle this, the CapisciAMe project developed a specialized dataset with 54,000 recordings from 170 native speakers with speech disorders. Participants also shaped the command vocabulary; as one participant, P4, noted:

"The use of 'go on' when already reading a text is quite stressful... sticking to digits instead of words... is far better; otherwise, selecting elements in complex [layout] pages would be extremely confusing."

Advanced tools like Screen Reader AI take this further by integrating the Document Object Model (DOM) and Accessibility Object Model (AOM) to create live semantic scene graphs. This technology helps the AI understand relationships between interface components, reshaping how users with disabilities interact with digital platforms [2]. Beyond navigation, these systems enhance experiences by providing real-time audio conversion.
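To make the idea of a semantic scene graph more concrete, here is a minimal sketch in Python. It is not how Screen Reader AI or any specific product works; it only assumes that each interactive element can be reduced to a role, an accessible name, and the landmark region that contains it. The class name `SceneGraphBuilder`, the tag-to-role table, and the sample markup are all hypothetical, and a real system would read roles from the browser's accessibility tree instead of guessing from tag names.

```python
from html.parser import HTMLParser

# Hypothetical tag-to-role table for this sketch only.
INTERACTIVE = {"a": "link", "button": "button", "input": "textbox"}
LANDMARKS = {"nav", "main", "form", "header", "footer"}
VOID_TAGS = {"input"}  # elements that never contain text


class SceneGraphBuilder(HTMLParser):
    """Collects interactive nodes and the landmark region containing each."""

    def __init__(self):
        super().__init__()
        self.nodes = []          # one dict per interactive element
        self.region_stack = []   # enclosing landmarks, innermost last
        self.open_node = None    # node whose visible text is still being read

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in LANDMARKS:
            self.region_stack.append(tag)
        if tag in INTERACTIVE:
            node = {
                "role": attributes.get("role") or INTERACTIVE[tag],
                "name": attributes.get("aria-label") or "",
                "region": self.region_stack[-1] if self.region_stack else None,
            }
            self.nodes.append(node)
            self.open_node = None if tag in VOID_TAGS else node

    def handle_data(self, data):
        # Fall back to visible text when no aria-label was supplied.
        if self.open_node is not None and not self.open_node["name"]:
            self.open_node["name"] = data.strip()

    def handle_endtag(self, tag):
        if tag in INTERACTIVE:
            self.open_node = None
        if self.region_stack and self.region_stack[-1] == tag:
            self.region_stack.pop()


sample_html = """
<nav><a href="/">Home</a><a href="/news" aria-label="Latest news">News</a></nav>
<form><input type="text" aria-label="Search"><button>Go</button></form>
"""
builder = SceneGraphBuilder()
builder.feed(sample_html)
for node in builder.nodes:
    print(node["region"], node["role"], node["name"])
```

With a structure like this, a conversational layer could answer a question such as "what can I do in the search form?" by filtering nodes on their region, which is the kind of relationship between components the paragraph above describes.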

Real-Time Text-to-Speech Conversion

Text-to-speech (TTS) technology is another game-changer, converting written content into natural-sounding audio. This feature makes websites more accessible for people with visual impairments, dyslexia, or those who prefer listening while multitasking. Today's AI-powered TTS systems can handle over 60 languages and offer more than 1,000 voice options.

In 2020, the BBC introduced an AI-driven synthetic voice on BBC.com after discovering that 62% of its audience spent up to four hours daily listening to podcasts instead of reading online content. This move not only improved accessibility but also boosted user engagement. Following this success, publishers like Forbes and The Guardian adopted similar TTS features to better serve visually impaired users and meet ADA and WCAG compliance standards.

Platforms like TTSBuddy offer free TTS services in nine languages with over 50 voice options, allowing users to convert any webpage into downloadable, natural-sounding audio. Features like real-time text highlighting, where the highlighted word follows the audio, are particularly helpful for individuals with ADHD or those learning a new language. Users can also adjust playback speed (up to 4.5x), pitch, and voice profile to suit their sensory preferences.
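Synchronized highlighting comes down to mapping the current audio position to a word. The sketch below shows one way this could work, assuming the TTS engine reports a start time for each word; that assumption, the timings, and the function names `to_audio_position` and `word_at` are illustrative and do not describe TTSBuddy's implementation.

```python
from bisect import bisect_right


def to_audio_position(elapsed_wall_time, playback_rate):
    """Convert wall-clock listening time into a position in the original audio."""
    return elapsed_wall_time * playback_rate


def word_at(word_starts, position):
    """Return the index of the word being spoken at `position` (seconds).

    `word_starts` holds each word's start time, sorted in ascending order.
    """
    index = bisect_right(word_starts, position) - 1
    return max(index, 0)


words = ["Text", "to", "speech", "helps", "readers"]
starts = [0.0, 0.4, 0.6, 1.2, 1.6]  # hypothetical timings, in seconds

# After half a second of listening at double speed, the audio is at 1.0 s.
position = to_audio_position(elapsed_wall_time=0.5, playback_rate=2.0)
print(words[word_at(starts, position)])
```

Because the lookup works on positions in the original audio, changing the playback speed only changes how fast the position advances; the word timings themselves never need to be recomputed.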

Real-Time Assistance and Error Correction

Conversational AI extends beyond voice commands and TTS by offering real-time guidance for navigating complex website layouts. Instead of leaving users to figure out intricate interfaces on their own, these systems provide adaptive task support. Using live semantic scene graphs, they can respond to user queries instantly and guide them step by step [2].

For individuals with cognitive disabilities like dyslexia, autism, or ADHD, this kind of assistance is transformative. The AI can break down multi-step tasks into smaller, more manageable steps and even predict what users might need for interactive elements like forms or multimedia [2]. As independent researcher Rushilkumar Patel explains:

"Initial findings suggest that conversational interaction can decrease cognitive load by reducing repetitive commands and streamlining information retrieval." [2]

These systems also proactively notify users about changes in dynamic content, ensuring those with cognitive processing delays stay informed [2]. By combining voice-based AI with visual overlays that highlight which commands trigger specific actions, navigation becomes more intuitive and errors are reduced.
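The step-by-step guidance described above can be sketched as a small loop over form fields: ask for one thing at a time, and when an answer fails validation, explain the problem before asking again. The `Step` structure, the validation rules, and the wording of the prompts are assumptions made for illustration, not taken from any of the systems cited here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    field: str                        # form field this step fills in
    prompt: str                       # what the assistant asks the user
    is_valid: Callable[[str], bool]   # simple validation rule
    hint: str                         # spoken when validation fails


def next_message(steps, answers):
    """Return the next thing the assistant should say, one step at a time."""
    for step in steps:
        value = answers.get(step.field)
        if value is None:
            return step.prompt
        if not step.is_valid(value):
            return f"{step.hint} {step.prompt}"
    return "All fields are complete. Say 'submit' to send the form."


steps = [
    Step("name", "What is your name?",
         lambda value: bool(value.strip()), "The name cannot be empty."),
    Step("email", "What is your email address?",
         lambda value: "@" in value, "That email address is missing an @ sign."),
]

print(next_message(steps, {}))
print(next_message(steps, {"name": "Ada", "email": "ada.example.org"}))
print(next_message(steps, {"name": "Ada", "email": "ada@example.org"}))
```

Only the first unresolved step is ever surfaced, so the user is never asked to hold the whole form in mind at once.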

This technology helps websites align with Web Content Accessibility Guidelines (WCAG), making content more perceivable and understandable for users who cannot rely solely on visual cues.


Research Findings: Conversational AI's Impact on Accessibility

Studies on AI Accessibility Tools

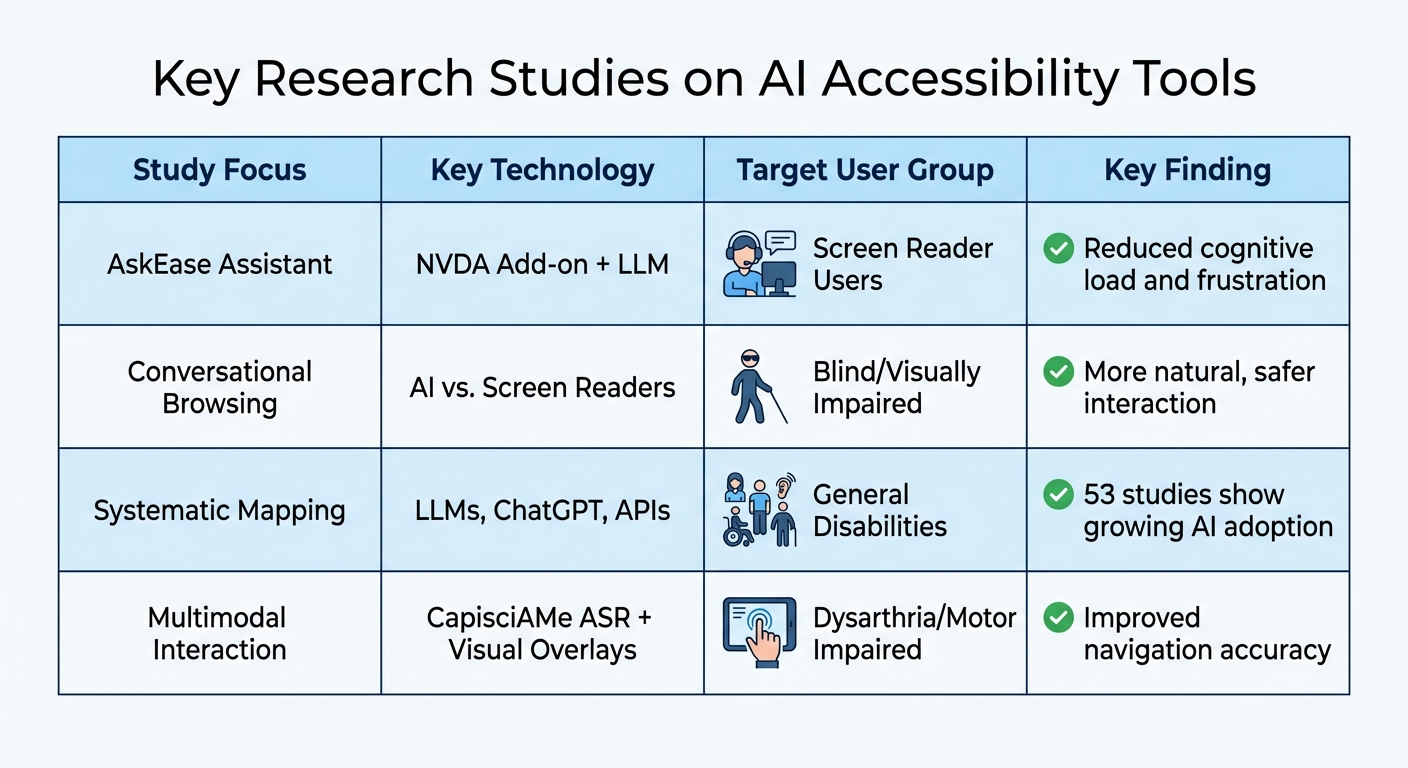

A study from 2026 reviewed 53 papers and revealed that AI tools are now capable of generating accessible HTML, creating alternative text, and supporting WCAG compliance. These advancements build on earlier discussions about how technology is shaping more accessible web interactions.

In January 2026, Microsoft Research introduced AskEase, an AI assistant designed as an add-on for the NVDA screen reader. A within-subjects study with 12 participants showed that AskEase provided context-aware, step-by-step guidance, which improved task success rates while reducing physical demand, effort, and frustration. As Nan Chen from Microsoft Research explained:

"AskEase significantly improved task success while reducing perceived workload, including physical demand, effort, and frustration."

Another experimental study from October 2025 involved 30 blind and visually impaired participants. This research compared AI-driven browsing tools with traditional screen readers, emphasizing a shift toward human-centered AI. By prioritizing user trust and safety over full autonomy, these tools offered a more inclusive, natural-language approach to navigation.

Here's a summary of key studies in this field:

| Study Focus | Key Technology | Target User Group | Key Finding |
|---|---|---|---|
| AskEase Assistant | NVDA Add-on + LLM | Screen Reader Users | Reduced cognitive load and frustration |
| Conversational Browsing | AI vs. Screen Readers | Blind/Visually Impaired | More natural, safer interaction |
| Systematic Mapping | LLMs, ChatGPT, APIs | General Disabilities | 53 studies show growing AI adoption |
| Multimodal Interaction | CapisciAMe ASR + Visual Overlays | Dysarthria/Motor Impaired | Improved navigation accuracy |

User Feedback on AI Accessibility Solutions

In addition to these studies, user feedback provides valuable insights into the effectiveness of AI accessibility solutions. Between September 2022 and January 2023, researchers from Politecnico di Milano and the University of Bari conducted a co-design study with five participants living with dysarthria. The project integrated a specialized ASR engine with the ConWeb platform, and participants found that assigning navigation commands to digits (1-10) was more effective than using standard conversational commands.
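The digit-based navigation that participants preferred can be illustrated with a short sketch: each visible element gets a number, and a recognized digit (spoken or numeric) selects it. This is an assumption-laden illustration, not the ConWeb or CapisciAMe implementation; the English word list, the function names, and the sample page elements are hypothetical.

```python
import re

# Hypothetical spoken forms for the digits 1-10 used as navigation commands.
SPOKEN_DIGITS = {
    "one": 1, "two": 2, "three": 3, "four": 4, "five": 5,
    "six": 6, "seven": 7, "eight": 8, "nine": 9, "ten": 10,
}


def label_elements(elements, page_size=10):
    """Assign the labels 1..page_size to the first visible elements."""
    return {index: name for index, name in enumerate(elements[:page_size], start=1)}


def resolve_command(transcript, labels):
    """Map a recognized utterance such as '3' or 'three' to a labeled element."""
    for token in re.findall(r"[a-z]+|\d+", transcript.lower()):
        number = int(token) if token.isdigit() else SPOKEN_DIGITS.get(token)
        if number in labels:
            return labels[number]
    return None  # Nothing matched: the interface should ask the user to repeat.


labels = label_elements(["Home", "Projects", "News", "Contact"])
print(resolve_command("three", labels))
print(resolve_command("12", labels))
```

A closed vocabulary of ten short words is far easier for a specialized recognizer to separate than open-ended phrases, which is one plausible reason the digit scheme worked better for speakers with dysarthria.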

Participants also pointed out the limitations of mainstream voice assistants, such as short listening windows and difficulty recognizing atypical speech patterns. They noted that numbered navigation simplified selecting page elements. Mainstream speech recognition platforms showed error rates as high as 80-90% on speech from individuals with severe dysarthria, which underscores the need for ASR models trained on atypical speech.
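For readers unfamiliar with the metric, word error rate (WER) is conventionally the number of word substitutions, deletions, and insertions needed to turn the recognizer's output into the reference transcript, divided by the number of words in the reference. The sketch below computes it with a standard word-level edit distance; the example sentences are invented and are not data from the studies above.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # previous[j] is the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


# One deleted word ("to") and two substitutions out of five reference words.
wer = word_error_rate("go to the next page", "go the text age")
print(f"{wer:.0%}")
```

An error rate of 80-90% therefore means that nearly every word the user says is misrecognized or dropped, which makes open-ended voice commands unusable in practice.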

TTSBuddy: Conversational AI for Accessibility

TTSBuddy Features

TTSBuddy combines conversational AI with accessibility tools to tackle challenges faced by many users. Its Chrome extension includes natural language voice chat for smoother navigation and transforms documents into interactive conversations, acting as what researchers call a "Summarization Agent". This feature simplifies complex documents into easy-to-understand information, which can be particularly helpful for individuals with dyslexia, reading disabilities, or cognitive difficulties.

The platform supports text-to-speech in more than nine languages with over 50 voice options, which helps non-native speakers hear correct pronunciation and lowers language barriers. Users can also download audio for offline use, so content remains available without an internet connection.

These tools make content easier to consume, reducing dependence on visual elements and offering a more interactive experience.

How TTSBuddy Supports Accessibility

TTSBuddy's advanced features provide auditory guidance, making it easier for blind or low-vision users to navigate digital spaces effectively. Its conversational interface delivers quicker answers compared to traditional static menus, removing obstacles in the browsing process. This aligns with research showing that reducing cognitive strain and enabling natural interactions can significantly improve user experiences. Modern AI-driven text-to-speech technology also produces speech with natural intonation, expressive emotion, and adaptive pronunciation, moving far beyond the robotic voices of earlier tools.

The platform is designed for multitasking, allowing users to interact with content while performing other activities, making digital engagement more convenient. By offering its services for free, TTSBuddy removes financial barriers, ensuring that assistive technology is accessible to everyone who needs it to navigate the web independently.

The Future of Conversational AI in Web Accessibility

Personalization and Multimodal Integration

Web accessibility is shifting from fixed designs to dynamic systems that adapt to individual user needs in real time. These systems can adjust elements like font size, contrast, and navigation based on how users interact with them, offering a more tailored experience.

A key development in this area includes "Orchestrator Agents." These agents assign tasks to specialized sub-agents - for instance, one might simplify a document while another modifies visual settings - ensuring the system maintains context across various activities. By integrating voice, vision, and text, these technologies create interactive experiences. Imagine real-time audio descriptions of live video that users can pause and even query for details.
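The orchestrator pattern can be sketched in a few lines: a coordinator routes each request to one or more specialized sub-agents and keeps a shared context so later requests can build on earlier ones. Everything here is a simplified assumption. In particular, keyword matching stands in for whatever planning a real orchestrator would do (likely a language model), and the agent functions, class name, and context keys are hypothetical.

```python
def simplify_agent(request, context):
    """Hypothetical sub-agent that rewrites content in plain language."""
    context["reading_level"] = "plain"
    return "Document rewritten in plain language."


def display_agent(request, context):
    """Hypothetical sub-agent that changes visual settings."""
    context["contrast"] = "high"
    return "Switched to high contrast."


class Orchestrator:
    """Routes requests to sub-agents and keeps context across requests."""

    def __init__(self, routes):
        self.routes = routes   # keyword -> sub-agent (stand-in for real planning)
        self.context = {}      # shared state that survives between requests

    def handle(self, request):
        lowered = request.lower()
        replies = [
            agent(request, self.context)
            for keyword, agent in self.routes.items()
            if keyword in lowered
        ]
        return replies or ["Sorry, no agent can handle that yet."]


orchestrator = Orchestrator({"simplify": simplify_agent, "contrast": display_agent})
print(orchestrator.handle("Please simplify this page and increase the contrast"))
print(orchestrator.context)
```

Keeping the context on the orchestrator, not on the individual agents, is what lets a later request such as "read it to me" respect the reading level and display settings chosen earlier.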

In February 2026, Google Research introduced the Multimodal Agent Video Player (MAVP), a prototype that turns static audio descriptions into interactive dialogues. Users can ask questions like, "What is the character wearing?" and receive detailed answers. To ensure privacy, advancements in personalization increasingly rely on local device processing and federated learning.

These innovations highlight how conversational AI is shaping the future of web accessibility, enabling smoother, more inclusive interactions across digital platforms.

Accessibility Across More Platforms

The reach of accessibility features is expanding, moving beyond traditional computers to include mobile devices, vehicles, wearables, and XR platforms. This ensures users experience consistent accessibility, regardless of the device or context.

For example, in February 2026, Google Research unveiled StreetReaderAI, a virtual assistant designed for blind and low-vision users. By combining an "AI Describer" for visual analysis with an "AI Chat" that retains location memory, the tool allows users to ask questions like, "Where was that bus stop?" and receive precise guidance based on nearby landmarks. As David Cespedes, QA Lead at Octahedroid, remarked:

"I think that in the near future we will start to think about accessibility not only for humans but also for artificial intelligence agents."

To make this vision a reality, web content must be structured with semantic HTML and proper ARIA labels. These elements are essential for AI agents to accurately interpret and relay information. Yet the WebAIM Million report for 2023 found detectable WCAG 2 failures on 96.3% of the top one million home pages. Bridging this gap is crucial as conversational AI becomes more integrated into everyday life.
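As a rough illustration of what "proper labels" means in practice, the sketch below scans markup for two common problems: images with no `alt` attribute and inputs with neither an `aria-label` nor a matching `<label for>`. It is a deliberately narrow check under stated assumptions, not a WCAG conformance test: it ignores `aria-labelledby`, labels that wrap their input, and every other success criterion. The class name `LabelAudit` and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class LabelAudit(HTMLParser):
    """Flags images without alt text and inputs without an accessible label."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self.labelled_ids = set()   # ids referenced by <label for="...">
        self.inputs = []            # (id, has_aria_label) for each visible input

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            # alt="" is allowed: it marks an image as decorative.
            self.issues.append(f"img missing alt: {attributes.get('src', '?')}")
        elif tag == "label" and attributes.get("for"):
            self.labelled_ids.add(attributes["for"])
        elif tag == "input" and attributes.get("type") != "hidden":
            self.inputs.append(
                (attributes.get("id"), bool(attributes.get("aria-label")))
            )

    def report(self):
        # Inputs are checked at the end because a <label> may follow its input.
        unlabeled = [
            f"input missing label: {input_id or '(no id)'}"
            for input_id, has_aria_label in self.inputs
            if not has_aria_label and input_id not in self.labelled_ids
        ]
        return self.issues + unlabeled


page = """
<img src="logo.png">
<img src="divider.png" alt="">
<label for="email">Email</label><input id="email" type="email">
<input id="phone" type="tel">
<input type="text" aria-label="Search">
"""
audit = LabelAudit()
audit.feed(page)
for issue in audit.report():
    print(issue)
```

An AI agent faces the same gap a screen reader does here: without a name for the `phone` field or the logo image, it has nothing reliable to relay to the user.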

The "curb-cut effect" serves as a reminder of how accessibility advancements benefit everyone. For instance, voice interfaces initially created for blind users now assist busy professionals, multitasking parents, and anyone who finds speaking more convenient than typing.

Conclusion: How Conversational AI Transforms Accessibility

Conversational AI has reshaped how individuals with disabilities interact with the web. Instead of being restricted to rigid, command-based navigation, users can now engage in natural-language conversations. This allows them to ask questions, clarify doubts, and explore connections between interface elements, making navigation easier and reducing mental strain.

Today's AI systems go a step further by integrating visual reasoning with the Document Object Model (DOM). This enables the creation of live semantic scene graphs, which provide detailed interpretations of dynamic content. The goal? To adapt technology to individual needs, rather than forcing users into fixed interaction patterns. A great example of this is TTSBuddy, which brings these advancements to life.

TTSBuddy, built for people with accessibility needs, offers free tools designed for inclusivity. Features include conversational webpage chat, natural-sounding audio in more than nine languages with over 50 voice options, and offline audio access. By staying free of charge, it ensures accessibility isn't hidden behind a paywall. With approximately 1.3 billion people worldwide living with disabilities, tools like TTSBuddy are crucial for making web content more accessible through voice and audio.

The future is already unfolding. AI now adapts interfaces in real time, combining voice, vision, and text to create a more inclusive digital landscape. As Rosa Lopez, Front-end Developer at Octahedroid, aptly puts it:

"Accessibility will become something essential like a QA process or web security."

This shift is making the web more inclusive by letting people reach content through natural dialogue instead of fixed interaction patterns.

FAQs

Do I still need a screen reader if I use conversational AI?

Yes, screen readers remain essential even with the rise of conversational AI. While AI chatbots can be helpful, they often come with accessibility challenges like focus management issues and limited compatibility with screen readers. This makes traditional assistive tools critical for ensuring full web accessibility.

How can websites support voice navigation without breaking WCAG?

Websites can support voice navigation without breaking WCAG by treating voice as an additional input method, not a replacement. Every action available by voice should remain reachable by keyboard, and voice features should not interfere with screen readers or visual elements. Using semantic HTML and ARIA labels, and keeping each control's visible label within its accessible name (WCAG 2.5.3, Label in Name), lets speech tools target controls by the words users see on screen.

What data does conversational AI need to personalize accessibility?

Conversational AI personalizes accessibility using three kinds of data: stated preferences (such as preferred voice, playback speed, font size, or contrast), past interactions, and specific accessibility requirements the user has shared. Combined with context about the current page and task, this lets the AI respond dynamically and tailor content to a wide range of users. Because this data can reveal sensitive information, personalization increasingly relies on local device processing and federated learning.